White House Eyes New AI Model Reviews Amid Cyberattack Concerns

May 10, 2026

Alex - aiToggler Team

Reviewed by a two-legged human.

It’s not every day you see the White House floating new rules for artificial intelligence, but here we are. If you’ve been watching the AI world, you know government regulation always seems to be a step behind the tech itself. Now, with cyberattacks and AI-powered threats getting more sophisticated, the U.S. government is hinting it might get more hands-on.

What’s being discussed: government reviews for new AI models

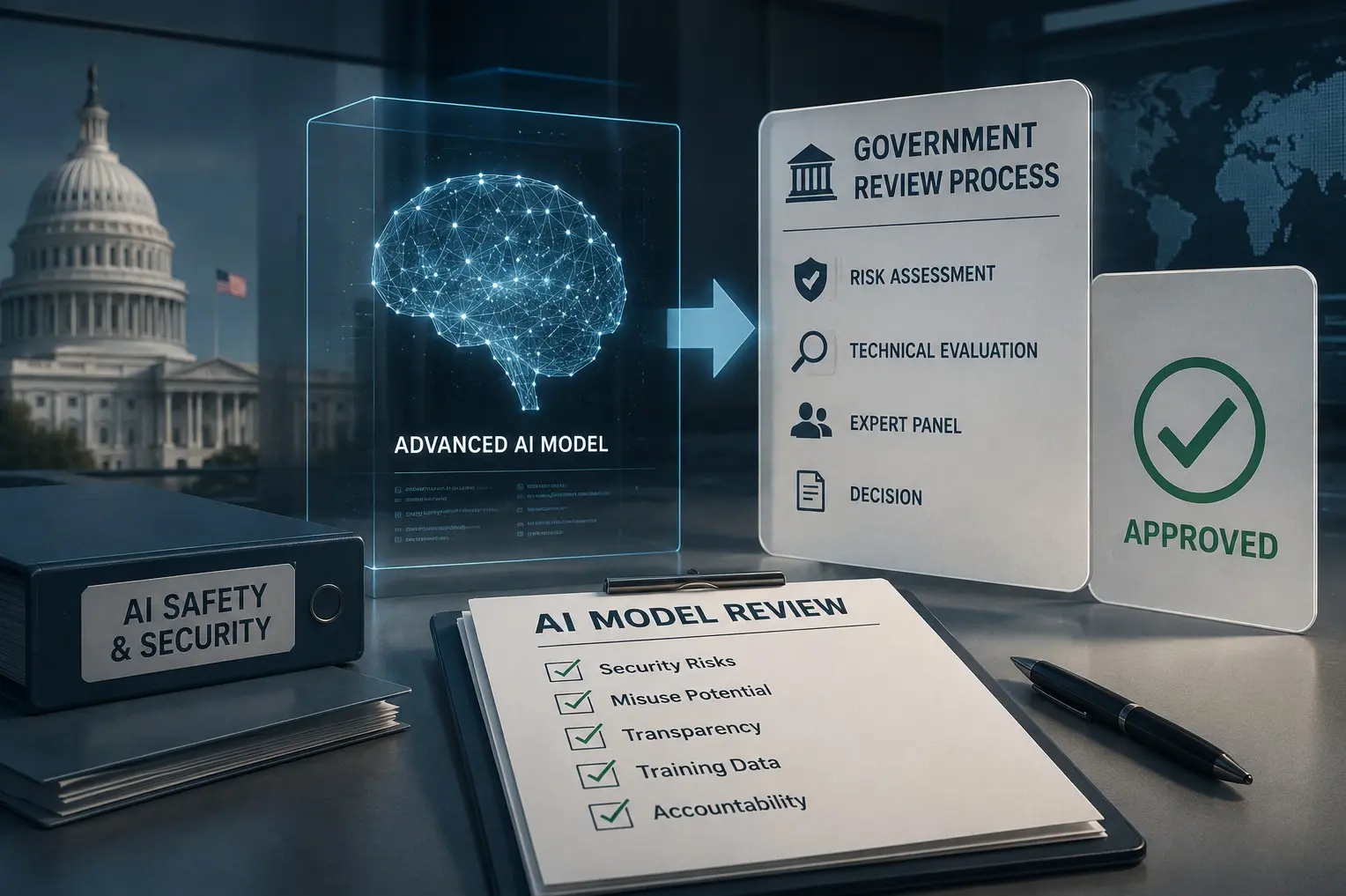

Several reports say the White House is considering a policy that would require government review of advanced AI models before public release. The idea comes as worries grow about AI being used for cyberattacks, misinformation, and other security headaches. The details are still fuzzy, but the general concept is a formal pre-launch check for certain high-risk models, similar to how new drugs or airplanes are vetted before they reach the public.

Why now? The cybersecurity push

This isn’t coming out of nowhere. Over the past year, there’s been a clear rise in AI-driven cyber incidents, from deepfake scams to automated hacking tools. Lawmakers and security folks have been raising concerns, saying voluntary guidelines just aren’t cutting it anymore. The White House’s interest in mandatory reviews seems to reflect a growing sense that AI’s risks are now too big to leave to self-policing.

What might a review process involve?

The specifics are still being worked out, but here’s what’s reportedly on the table:

- Only the most advanced or potentially risky models would face review. Everyday chatbots and productivity tools probably wouldn’t be affected.

- Reviews would likely focus on security vulnerabilities, how easily the model could be misused, and how transparent companies are about their training data and model capabilities.

- It’s still unclear who would run these reviews. It could be a new agency, an expanded role for an existing one, or some kind of public-private partnership.

This isn’t just about stopping “bad” AI. The goal is also to make sure companies are thinking about the ripple effects before releasing powerful new models into the wild.

How is the industry reacting?

So far, the tech world’s response has been pretty cautious. Some leaders say a review process could help build public trust, especially as AI models get more complex and harder for outsiders to audit. Others are worried about slowing down innovation or ending up with a confusing patchwork of global rules that make it tough to launch products internationally.

It’s also worth mentioning that similar debates are happening in Europe and Asia, where governments are looking at stricter AI oversight. If the U.S. moves forward, it could set an example—assuming it finds a workable balance between safety and progress.

My take: is this needed, or just more bureaucracy?

Honestly, I think some friction is unavoidable when technology starts touching national security and public safety in such a direct way. The real challenge will be designing a process that’s fast enough to keep up with AI’s pace, but thorough enough to catch real risks. If the White House manages that, it could become a template for other countries. If not, we might just get more paperwork and not much actual protection.

For now, everyone’s watching Washington to see if these talks turn into real policy. Either way, it feels like the “move fast and break things” era for AI might finally be winding down.
