Pixverse v5.5 — Multi-Shot Video AI

Video quality is okay, but the audio is what sets it apart.

What is Pixverse 5.5?

PixVerse v5.5, released December 1, 2025, turns a single prompt into a short (5-10 s) video clip with native audio (music, SFX, dialogue), multi-shot camera sequencing (cuts, push-ins, shot-scale switches), and flexible resolution and aspect-ratio options.

What's Pixverse v5.5 Actually Like?

Pixverse v5.5 does something none of the other models compared here do: multi-shot camera sequencing from a single prompt. You describe a scene, and instead of holding one continuous camera angle, the model plans and executes multiple cuts (wide shot, close-up, reaction shot), like a little editor living inside the AI.

Multi-Shot Is the Real Flex

"A detective enters a dark room, picks up a note, looks out the window." Most models: one continuous shot. Pixverse: three distinct shots with natural cuts between them. Saves hours of manual editing for storytelling content.

Thinking Mode

Turn it on for complex prompts and the model takes extra time to plan the scene before rendering. Abstract requests, tricky physics, unusual styles — Thinking Mode handles them noticeably better. Slower, but worth it when you need it.

Where Others Win

VEO 3 supports longer clips (up to 60 seconds), and KlingAI 2.6 Pro has better environmental audio. At 10 seconds max, Pixverse clips are short. Multi-shot sequencing is the reason to pick this model; if you don't need it, another model may serve you better.

Best For

- ✓ Short narrative content with natural cuts

- ✓ Social media storytelling

- ✓ Complex or abstract scenes (Thinking Mode)

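Quick Specs

- Released: December 1, 2025

- Clip length: 5-10 seconds

- Resolution: up to 4K

- Aspect ratios: 16:9, 9:16, 1:1

- Audio: native (music, SFX, dialogue)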

Try it at app.aitoggler.com — pay per clip, no subscription.

Image-to-Video: Bring Stills to Life

Starting Input

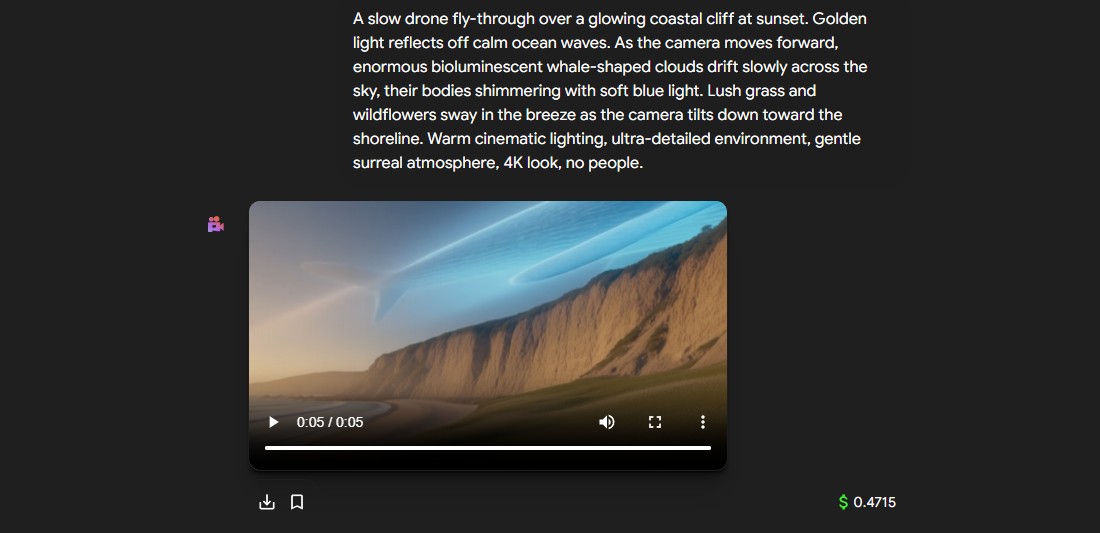

Prompt: "Zoom in slowly on the subject, dramatic rim lighting, wind blowing through hair."

Generated Video (Output)

Pixverse 5.5 applies realistic motion and complex camera movements to any still image.

Unleash Cinematic Power with Pixverse 5.5

Native Audio Generation

Generate synchronized audio, including music, sound effects, and dialogue, directly within your video generation process.

Multi-Shot Sequencing

Create complex narratives with multi-shot generation: cuts, push-ins, and shot-scale switches, all within a single clip.

Thinking Mode

Enable extended processing time for the model to "think" longer, resulting in significantly higher fidelity and prompt adherence.

Ready to Generate?

Start your cinematic journey now and explore the future of high-definition video creation powered by Pixverse 5.5.

Try it now

Compare With Other Models

Explore alternatives and find the best fit for your project.

Seedance 1.5 Pro

ByteDance's joint audio-video model with industry-leading lip-sync accuracy.

KlingAI 2.6 Pro

Kuaishou's cinematic model with native sound effects and physics simulation.

VEO 3

Google's 4K flagship supporting up to 60-second clips with depth-of-field control.

WAN 2.6

Alibaba's instruction-following model with strong subject consistency and long coherence.

Grok Imagine Video

xAI's spatially-aware model that understands 3D world dynamics.

Frequently Asked Questions

What makes Pixverse v5.5 different from other video models?

Pixverse v5.5 is the only model in this class that offers multi-shot camera sequencing, generating cuts, push-ins, and shot-scale switches within a single clip. Combined with native audio and a Thinking Mode for difficult prompts, it produces mini-narratives rather than single-shot clips.

How much does Pixverse v5.5 cost?

You pay only the direct API cost per generation. The exact USD price appears in the model tooltip at app.aitoggler.com before you click generate. No credits, no plans, no hidden fees.

What resolution, duration, and aspect ratios does Pixverse v5.5 support?

Pixverse v5.5 generates clips at up to 4K resolution with durations of 5 to 10 seconds. It supports 16:9, 9:16, and 1:1 aspect ratios.
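If you script your generations, it can help to check settings against these limits before paying for a clip. The sketch below is only an illustration: `ClipRequest` and `validate` are hypothetical names, not part of any PixVerse or aiToggler API, and it assumes any whole-second duration between 5 and 10 is accepted, which this page does not confirm.

```python
from dataclasses import dataclass

# Limits quoted on this page. All names here are hypothetical,
# not a real PixVerse or aiToggler interface.
MIN_DURATION_S = 5
MAX_DURATION_S = 10
ASPECT_RATIOS = {"16:9", "9:16", "1:1"}


@dataclass(frozen=True)
class ClipRequest:
    prompt: str
    duration_s: int = 5
    aspect_ratio: str = "16:9"
    thinking_mode: bool = False


def validate(request: ClipRequest) -> list[str]:
    """Return a list of problems; an empty list means the request fits the stated limits."""
    problems = []
    if not request.prompt.strip():
        problems.append("prompt is empty")
    if not MIN_DURATION_S <= request.duration_s <= MAX_DURATION_S:
        problems.append(
            f"duration must be {MIN_DURATION_S}-{MAX_DURATION_S} s, got {request.duration_s}"
        )
    if request.aspect_ratio not in ASPECT_RATIOS:
        problems.append(f"unsupported aspect ratio: {request.aspect_ratio}")
    return problems
```

For example, a 60-second 4:3 request would come back with two problems (duration and aspect ratio), while an 8-second 9:16 request would come back with none.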

Can Pixverse v5.5 turn an image into a video?

Yes. Upload a reference image with a text prompt and Pixverse v5.5 will add motion, camera movement, and audio to bring it to life while preserving the original composition.

What does Thinking Mode do?

Thinking Mode gives the model extra processing time to plan complex scenes. It improves prompt adherence and visual quality for detailed or abstract prompts, similar to how chain-of-thought reasoning works in language models.

Do I need to install anything to use Pixverse v5.5?

No. aiToggler is entirely web-based. Access Pixverse v5.5 from any browser on any device. You can install aiToggler as a PWA on your home screen for native-app speed without security risks.